Introduction to IBM Data Engineer

“Where there is data smoke, there is business fire.” — Thomas Redman

In today’s world, data may be used for a variety of purposes, from tailoring marketing campaigns to powering self-driving automobiles. Data scientists are in charge of evaluating data and putting it to use for diverse objectives.

However, they need high-quality data to carry out difficult tasks such as projecting business trends. That’s where data engineers come in. Data engineering is the practice of gathering and validating data for use by data scientists.

Data engineering may be a lucrative career option due to its high demand and strong pay range. However, before embarking on a career as a data engineer, you should be aware of the following.

Data engineers design and manage the data infrastructures that power commercial information systems and applications. They may work with something small, such as a relational database for a small firm, or something large, such as a petabyte-scale data lake for a Fortune 500 corporation.

Data engineers are responsible for designing, building, and deploying data systems. These systems power machine learning and artificial intelligence analytics. Data engineers also create processes for a variety of data tasks, including data collection, data transformation, and data modeling.

There are several paths to becoming a data engineer, whether you study at a university or on your own.

About IBM

Most of us have surely seen or owned an IBM computer at some point. IBM originally specialized in mainframe computers, which were costly medium- to large-scale computers capable of processing numerical data at high rates. The IBM Personal Computer debuted in 1981, when the corporation entered the booming market for personal computers.

Despite capturing a sizable market share, IBM was unable to maintain its traditional supremacy as a manufacturer of personal computers. New semiconductor-chip–based technologies were shrinking and simplifying computer manufacturing, allowing smaller businesses to enter the market and capitalize on new advances such as workstations, computer networks, and computer graphics.

IBM provides a wide range of goods and services, including software, consulting, and hybrid cloud infrastructure. The firm also offers its clients financial services to help them purchase information technology (IT) systems, software, and services. The company serves customers in over 175 countries and competes with hundreds of enterprises ranging from tiny businesses to major multinational organizations.

In 2020, IBM earned about 73 billion US dollars in global sales, with the Americas accounting for roughly half of this. That year, IBM’s research and development spending totaled 6.33 billion US dollars as the company continued to innovate, with an emphasis on AI and hybrid cloud technologies.

The Interview Process- IBM Data Engineer

The IBM data engineer interviews are designed differently for fresh graduates and experienced applicants, with distinct expectations.

Freshers are tested in the following areas during the IBM Data Engineer interview process:

- Cognitive ability

- English language skills

- Adaptability to learning

- Coding skills

For experienced applicants, the IBM Data Engineer interview process is substantially more specialized and focused on the subject of expertise. The procedure consists of the following stages:

- Phone interview

- Objective language test

- Logical reasoning

- Technical interview

- Behavioral interview

For experienced candidates, the IBM data engineer interview process is shorter than for recent graduates or entry-level roles. Before you begin preparing, get acquainted with every round of the process so that you can plan your preparation efficiently.

The following are the steps in the IBM data engineer interview process.

Step-1 for IBM Data Engineer Interview: Application – The first step is to fill out the job application on the company’s official website. You may also join their talent network to stay up to date on new opportunities and stay in touch with the organization.

Step-2 for IBM Data Engineer Interview: Application screening – Your application is then reviewed by specialists who decide whether or not you should move on to the next level.

Step-3 for IBM Data Engineer Interview: Online evaluation – The next phase is an online evaluation of the candidate. Depending on the function, this round may contain one or more of the following assessments: Cognitive Ability Assessment, Coding Assessment, Video Assessment, and English Language Assessment. There are no roles that require you to complete all four assessments.

Step-4 for IBM Data Engineer Interview: Interview/assessment center – If you pass the online examinations, you’ll be invited to a personal interview, which is divided into two rounds: technical and HR.

Please keep in mind that, because of the ongoing pandemic, all rounds of interviews will be conducted online.

The interview procedure for IBM data engineer differs depending on the position and the interviewing panel. If the organization is in desperate need of software engineers or developers, the onboarding process might be exceedingly short. In most cases, the interview process might last up to two weeks, if not longer. There is no set period for receiving the company’s offer letter.

Once your application has been shortlisted, the IBM Data engineer interview procedure is divided into two stages. Let’s have a look at what each one implies.


Part 1: Online Evaluation for IBM Data Engineer

IBM’s online testing includes topics such as Quantitative Aptitude, Number Series, and English Language. This test consists of 72 questions and lasts 100 minutes.

The English language test comprises vocabulary, grammar, and comprehension problems. This round should be easy if you have a basic grasp of high-school-level English. The questions are multiple-choice with no negative marking.

Candidates who pass this step are invited to an interview, which is often held in IBM assessment centers. This round can only be cracked with a lot of practice.

Take online mock aptitude exams to ensure you’re well prepared for this stage. Practice puzzles and other logical thinking questions as well for this round. You may easily pass IBM’s online examinations if you have a basic comprehension of the subjects and good time management abilities.

Part 2: Technical and Human Resources Interviews for IBM Data Engineer

Candidates who pass the online examinations are generally invited to an on-site technical and HR interview stage. The IBM data engineer technical round is similar to that of the FAANG companies (Facebook, Amazon, Apple, Netflix, and Google).

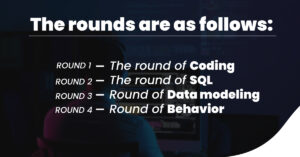

You must have a good foundational grasp of your domain subjects to pass IBM data engineer’s technical round. The technical interview contains three to four rounds, and interviews take place throughout one full day. The rounds are as follows:

- Coding round

- SQL round

- Data modeling round

- Behavioral round

Each of these rounds might last between 20 and 30 minutes. During the on-site interview, your ability to tackle complicated, difficult problems analytically is thoroughly examined.

The next stage after the technical interview for IBM Data Engineer is the HR interview. This round will include questions on your career objectives and aspirations, as well as a test of your communication abilities. The interviewer may also discuss your accomplishments and other information from your résumé.

You’ll be expected to introduce yourself and discuss your strengths and weaknesses. This round will put your personality to the test. Make sure you’re ready for any curveballs the interviewer may throw your way. This round is designed to determine whether you’d be a suitable match for the company’s culture.

IBM also has a webpage to educate candidates about their recruitment process. You may check it out here to know more about IBM Data Engineer.

Interview Questions- IBM Data Engineer

The interview questions that may come up in your IBM Data Engineer interviews are grouped below into three levels: Basic, Intermediate, and Advanced. This should help freshers and experienced candidates alike.


Basic Questions – IBM Data Engineer

- What exactly is Data Engineering?

Data engineering is the process of turning raw data into valuable information that can be used for a variety of purposes. It involves the data engineer collecting data, studying it, and preparing it for use.

- Define the term “Data Modeling.”

Data modeling is the process of representing complex data and software concepts as simple diagrams that are easy to grasp, and it has no prerequisites. The diagrams give a visual representation of the data objects involved, the relationships between them, and the rules associated with them.

- What are some of the design schemas that are utilized in Data Modeling?

Two schemas are commonly used in data modeling. They are as follows:

- Star schema

- Snowflake schema
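To make the star layout concrete, here is a minimal sketch using Python's built-in `sqlite3` module. The table and column names (`fact_sales`, `dim_product`, `dim_date`) and the sample rows are hypothetical, chosen only for illustration: one central fact table holds the measurements and points to the dimension tables around it.

```python
import sqlite3

# Hypothetical retail example: one fact table surrounded by dimension tables.
conn = sqlite3.connect(":memory:")
conn.executescript("""
    CREATE TABLE dim_product (product_id INTEGER PRIMARY KEY, name TEXT, category TEXT);
    CREATE TABLE dim_date    (date_id INTEGER PRIMARY KEY, day TEXT, year INTEGER);
    CREATE TABLE fact_sales (
        sale_id    INTEGER PRIMARY KEY,
        product_id INTEGER REFERENCES dim_product(product_id),
        date_id    INTEGER REFERENCES dim_date(date_id),
        amount     REAL
    );
    INSERT INTO dim_product VALUES (1, 'Laptop', 'Hardware'), (2, 'Antivirus', 'Software');
    INSERT INTO dim_date VALUES (10, '2021-01-05', 2021), (11, '2021-01-06', 2021);
    INSERT INTO fact_sales VALUES (100, 1, 10, 900.0), (101, 2, 10, 50.0), (102, 1, 11, 950.0);
""")

# A typical star-schema query: join the fact table to a dimension and aggregate.
rows = conn.execute("""
    SELECT p.category, SUM(f.amount)
    FROM fact_sales AS f
    JOIN dim_product AS p ON p.product_id = f.product_id
    GROUP BY p.category
    ORDER BY p.category
""").fetchall()
print(rows)  # [('Hardware', 1850.0), ('Software', 50.0)]
```

In a snowflake schema the dimensions would be normalized further, for example by moving `category` out of `dim_product` into its own table that `dim_product` references.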

- What exactly is Hadoop? Briefly explain.

Hadoop is an open-source framework for storing and processing data and for running applications on groups of machines known as clusters. When it comes to working with and handling Big Data, Hadoop has long been the gold standard.

The key advantage is that it provides massive amounts of storage space and computing capacity, so that a very large number of jobs and tasks can be handled concurrently.

- What are some of the most significant Hadoop components?

Hadoop has numerous components. Some of the most significant are as follows:

Hadoop Common: This is the collection of libraries and utilities used by the other Hadoop modules.

HDFS: When working with Hadoop, all data is stored in the Hadoop Distributed File System (HDFS). It provides an extremely high-bandwidth distributed file system.

Hadoop YARN: Yet Another Resource Negotiator is the resource manager in the Hadoop system. YARN can also be used for task scheduling.

Hadoop MapReduce: This is the programming model and engine that gives users access to large-scale, parallel data processing.

- What exactly is a NameNode in HDFS?

NameNode is an essential component of HDFS. It stores the metadata for all of the HDFS data and keeps track of the files across the cluster.

However, you should be aware that the actual data is kept in the DataNodes rather than the NameNode.

- What exactly is Hadoop Streaming?

Hadoop streaming is one of Hadoop’s most popular utilities. It lets users write the map and reduce steps as ordinary executables or scripts that read from standard input and write to standard output. The resulting job can then be submitted to a specific cluster to run.
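As a rough illustration of the streaming model, the sketch below writes a word-count mapper and reducer in Python. It assumes the usual streaming convention of tab-separated `key<TAB>value` lines and runs locally: `sorted()` stands in for Hadoop's shuffle-and-sort step, and the function names and sample text are made up for this example. In a real job the two functions would live in separate scripts that read `sys.stdin` and print to standard output.

```python
from itertools import groupby


def mapper(lines):
    """Emit one 'word<TAB>1' line per word, as a streaming mapper would print."""
    for line in lines:
        for word in line.split():
            yield f"{word.lower()}\t1"


def reducer(sorted_lines):
    """Sum the counts per word; input must be sorted by key, as after the shuffle."""
    pairs = (line.split("\t", 1) for line in sorted_lines)
    for word, group in groupby(pairs, key=lambda kv: kv[0]):
        yield f"{word}\t{sum(int(count) for _, count in group)}"


# Local simulation of map -> shuffle/sort -> reduce.
text = ["big data big clusters", "data pipelines"]
result = list(reducer(sorted(mapper(text))))
print(result)  # ['big\t2', 'clusters\t1', 'data\t2', 'pipelines\t1']
```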

- What are some of Hadoop’s key characteristics?

- Hadoop is a free and open-source framework.

- Hadoop is based on distributed computing.

- Parallel computing allows for faster data processing.

- Data is stored in clusters distinct from the operations.

- To avoid data loss, data redundancy is prioritized.


- What is the difference between Block and Block Scanner in HDFS?

A block is the smallest unit of data in HDFS. When Hadoop comes across a huge file, it automatically divides it into smaller sections known as blocks.

A block scanner runs on each DataNode and periodically verifies the blocks stored there, so that lost or corrupted blocks are detected.
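The two ideas can be sketched in a few lines of Python. This is a simplified illustration, not HDFS code: the 128 MB block size is an assumption (it is configurable per cluster), and `zlib.crc32` merely stands in for whatever checksum the real block scanner uses.

```python
import zlib

BLOCK_SIZE = 128 * 1024 * 1024  # assumed block size of 128 MB


def split_into_blocks(file_size, block_size=BLOCK_SIZE):
    """Return the sizes (in bytes) of the blocks a file would be divided into."""
    if file_size <= 0:
        return []
    full_blocks, remainder = divmod(file_size, block_size)
    return [block_size] * full_blocks + ([remainder] if remainder else [])


def scan_block(data, expected_checksum):
    """Mimic a block scanner: recompute the checksum and compare with the stored one."""
    return zlib.crc32(data) == expected_checksum


# A 300 MB file needs two full blocks plus one partial 44 MB block.
sizes = split_into_blocks(300 * 1024 * 1024)
print(len(sizes), sizes[-1] // (1024 * 1024))  # 3 44

block = b"some block contents"
stored = zlib.crc32(block)
print(scan_block(block, stored))          # True
print(scan_block(block + b"!", stored))   # False: corruption detected
```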

Intermediate Questions for IBM Data Engineer:

- What are some of Reducer’s methods?

The three primary methods involved with reducer are as follows:

- setup(): This method is called once before any keys are processed and is used to configure settings such as input data parameters and caches.

- cleanup(): This method is called once at the end and is used to remove temporary files that have been stored.

- reduce(): This method is called once for each key and is the single most significant aspect of the reducer as a whole.
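The order in which these methods run can be shown with a plain-Python analogue. This is not the Hadoop Java API: `SumReducer` and `run` are hypothetical names, and the driver only imitates how a framework would call setup once, reduce once per key, and cleanup once at the end.

```python
from itertools import groupby


class SumReducer:
    """Plain-Python stand-in for a reducer with setup/reduce/cleanup hooks."""

    def setup(self):
        # Runs once before any key is processed.
        self.results = {}
        self.calls = ["setup"]

    def reduce(self, key, values):
        # Runs once per distinct key, with all values for that key.
        self.calls.append(f"reduce:{key}")
        self.results[key] = sum(values)

    def cleanup(self):
        # Runs once after the last key; a real job would release resources here.
        self.calls.append("cleanup")


def run(reducer, sorted_pairs):
    """Hypothetical driver that imitates the framework's calling order."""
    reducer.setup()
    for key, group in groupby(sorted_pairs, key=lambda kv: kv[0]):
        reducer.reduce(key, (value for _, value in group))
    reducer.cleanup()
    return reducer.results


reducer = SumReducer()
totals = run(reducer, sorted([("b", 1), ("a", 2), ("b", 3)]))
print(totals)         # {'a': 2, 'b': 4}
print(reducer.calls)  # ['setup', 'reduce:a', 'reduce:b', 'cleanup']
```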

- What are the various Hadoop usage modes?

Hadoop can be run in three distinct modes. They are as follows:

- Standalone mode

- Pseudo-distributed mode

- Fully distributed mode

- How does Hadoop maintain data security?

Hadoop secures data through a ticket-based authentication flow (Kerberos). The steps are as follows:

- To begin, the authentication channel that connects clients to the server is secured, and the client receives a time-stamped ticket.

- Second, the client uses the received time-stamped ticket to request a service ticket.

- Finally, the client uses the service ticket to authenticate its connection to the relevant server.

- What are the default port numbers in Hadoop for Job Tracker, Task Tracker, and NameNode?

- The default port for Job Tracker is 50030.

- The default port for Task Tracker is 50060.

- The default port for NameNode is 50070 (Hadoop 3 moved the NameNode web UI to port 9870).

- How does Big Data Analytics assist a company to enhance its revenue?

Data analytics benefits today’s businesses in a variety of ways. The main areas it helps with are as follows:

- Using data effectively to support structured growth

- Analyzing how to increase customer value and retention

- Improving staffing procedures and workforce forecasting

- Significantly lowering production costs

- What, in your opinion, is the primary function of a Data Engineer?

A Data Engineer is in charge of a variety of tasks. Some of the most important are as follows:

- Handling data intake and pipeline processing

- Maintaining data staging locations and being in charge of ETL data transformation activities

- Performing data cleaning and redundancy removal

- Developing ad hoc query construction processes and native data extraction methodologies

- What are some of the technologies and abilities required of a Data Engineer?

The following are the key technologies and skills that a Data Engineer must be familiar with:

- Mathematics (probability and linear algebra)

- Summary statistics

- R and SAS programming languages for machine learning

Advanced Questions for IBM Data Engineer:

- What are the different components of the Hive data model?

The following are some of Hive’s components:

- Buckets

- Tables

- Partitions

- Is it possible to make more than one table for a single data file?

Yes, several tables can be created for a single data file. Schemas are stored in Hive’s metastore. As a result, obtaining the result for the appropriate data is quite simple.

- What does it indicate in Hive when tables are skewed?

Skewed tables are those in which some values appear very often. The more often they repeat, the greater the skew.

A table can be declared as SKEWED when it is created in Hive. When that is done, the skewed values are written to separate files, and the remaining data goes to another file.

- What are the different types of collections available in Hive?

The following collection data types are available in Hive:

- Array

- Map

- Struct

- Union

- What Hive table-generating functions are available?

The following are some of Hive’s table-generating functions:

- explode(array)

- explode(map)

- json_tuple()

- stack()
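To show what exploding an array means, here is a plain-Python analogue rather than HiveQL. The `explode` helper and the sample rows are hypothetical; the helper keeps the other columns of each row, which is closer to what a Hive query returns when explode is combined with a lateral view.

```python
def explode(rows, column):
    """Yield one output row per element of the list stored in `column`."""
    for row in rows:
        for item in row[column]:
            # Copy the row and replace the list with a single element.
            yield {**row, column: item}


rows = [
    {"user": "ann", "skills": ["sql", "etl"]},
    {"user": "bob", "skills": ["spark"]},
]
exploded = list(explode(rows, "skills"))
for row in exploded:
    print(row)
# {'user': 'ann', 'skills': 'sql'}
# {'user': 'ann', 'skills': 'etl'}
# {'user': 'bob', 'skills': 'spark'}
```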

Skills, Responsibilities, and Salary- IBM Data Engineer

Skills to become an IBM Data Engineer

Aside from a solid foundation in software engineering, data engineers must be fluent in programming languages used for statistical modeling and analysis, data warehousing solutions, and data pipeline development.

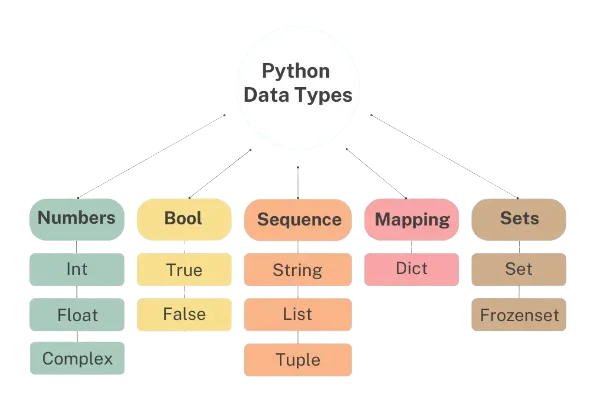

Database management systems (SQL and NoSQL). SQL is the industry standard for creating and administering relational database systems (tables that consist of rows and columns). NoSQL databases are non-tabular and can take the form of a graph or a document, depending on their data model. Data engineers must be able to use database management systems (DBMS), which are software applications that provide an interface to databases for the storage and retrieval of information.

Data Warehousing Solutions. Massive amounts of current and historical data are stored in data warehouses for query and analysis. This information is derived from a variety of sources, including CRM systems, accounting software, and ERP software. The company then uses the data for reporting, analytics, and data mining. The majority of organizations require entry-level engineers to be conversant with Amazon Web Services (AWS), a cloud services platform that includes a whole ecosystem of data storage technologies.

ETL Software. ETL (Extract, Transform, Load) describes how data is obtained (extracted) from a source, converted (transformed), and stored (loaded) in a data warehouse. This method typically employs batch processing to help users analyze data relevant to a specific business challenge. An ETL process collects data from multiple sources, applies business rules to the data, and then puts the transformed data into a database or business intelligence platform where it can be accessed and used by everyone in the company.
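The three ETL stages can be sketched with the Python standard library alone. Everything specific here is an assumption made for illustration: the inline CSV sample, the `orders` table, and the business rule that rows with an invalid amount are dropped.

```python
import csv
import io
import sqlite3

# Hypothetical raw source data with messy names and one invalid amount.
RAW_CSV = """order_id,customer,amount
1, alice ,10.50
2,BOB,not_a_number
3,carol,7.25
"""


def extract(text):
    """Extract: read raw rows from the CSV source."""
    return list(csv.DictReader(io.StringIO(text)))


def transform(rows):
    """Transform: normalize names and drop rows that break the (assumed) rule."""
    clean = []
    for row in rows:
        try:
            amount = float(row["amount"])
        except ValueError:
            continue  # assumed business rule: skip rows with invalid amounts
        clean.append((int(row["order_id"]), row["customer"].strip().title(), amount))
    return clean


def load(rows, conn):
    """Load: write the transformed rows into the target table."""
    conn.execute(
        "CREATE TABLE IF NOT EXISTS orders (order_id INTEGER, customer TEXT, amount REAL)"
    )
    conn.executemany("INSERT INTO orders VALUES (?, ?, ?)", rows)
    conn.commit()


conn = sqlite3.connect(":memory:")
load(transform(extract(RAW_CSV)), conn)
loaded = conn.execute("SELECT customer, amount FROM orders ORDER BY order_id").fetchall()
print(loaded)  # [('Alice', 10.5), ('Carol', 7.25)]
```

Keeping each stage as its own function makes it easy to test the business rules in `transform` without touching the source or the target.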

Machine Learning. Machine learning algorithms, often known as models, assist data scientists in making predictions from current and past data. Data engineers need only a basic understanding of machine learning to better understand the needs of a data scientist (and, by implication, the needs of the company), get models into production, and construct more accurate data pipelines.

Data APIs. An API is an interface that software programs use to access data. It enables two applications or devices to interact with one another in order to perform a certain job. Web applications, for example, employ an API to connect the user-facing front end to the back-end functionality and data. When a request is made on a website, the API allows the application to read the database, get information from the necessary tables, process the request, and deliver an HTTP-based response to the web template, which is then shown in the web browser. Data engineers create APIs on top of databases to allow data scientists and business intelligence analysts to query the data.
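The request flow described above can be sketched without a web framework. In this simplified example the `users` table, the `/users` path, and the `handle_request` function are all hypothetical; a real service would sit behind an HTTP server or framework, but the steps are the same: receive a request, read the database, and return a response.

```python
import json
import sqlite3


def make_database():
    """Build a small in-memory database with a hypothetical `users` table."""
    conn = sqlite3.connect(":memory:")
    conn.execute("CREATE TABLE users (id INTEGER PRIMARY KEY, name TEXT)")
    conn.executemany("INSERT INTO users VALUES (?, ?)", [(1, "Ann"), (2, "Bob")])
    return conn


def handle_request(conn, method, path):
    """Map an HTTP-style request to a (status code, JSON body) pair."""
    if method == "GET" and path == "/users":
        rows = conn.execute("SELECT id, name FROM users ORDER BY id").fetchall()
        return 200, json.dumps([{"id": i, "name": n} for i, n in rows])
    return 404, json.dumps({"error": "not found"})


conn = make_database()
status, body = handle_request(conn, "GET", "/users")
print(status, body)
# 200 [{"id": 1, "name": "Ann"}, {"id": 2, "name": "Bob"}]
```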

Python, Java, and Scala programming languages. Python is one of the most widely used languages for statistical analysis and modeling. Java is frequently used in data architecture frameworks, and the vast majority of their APIs are written in Java. Scala is a separate language that interoperates with Java because it runs on the JVM (a virtual machine that enables a computer to run Java programs).

Understanding the fundamentals of distributed systems. One of the most crucial data engineer skills is Hadoop fluency. The Apache Hadoop software library provides a platform for distributed processing of massive data volumes across computer clusters using simple programming models. It is intended to scale from a single server to thousands of machines, each of which provides local computing and storage. Apache Spark, a widely used engine for large-scale data processing, is written largely in the Scala programming language.

Here are a few job listings from IBM that you can check out and get a better idea of their requirements as well as your responsibilities.

- IBM Data Engineer: Business Intelligence

- IBM Data Engineer: Data Warehouse

- IBM Data Engineer: Data Integration

- IBM Data Engineer: Data Modeling

- IBM Data Engineer: Big Data

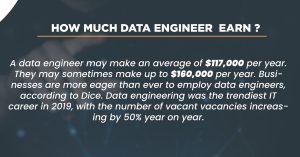

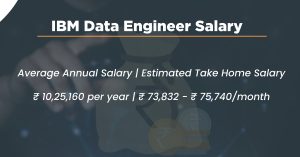

IBM Data Engineer Salary

IBM’s pay is on par with the average data engineer salary at software product companies. For people with less than one year to ten years of experience, the average IBM Data Engineer pay in India is 10.3 Lakhs per year. The annual compensation for an IBM Data Engineer ranges from 4.6 Lakhs to 18 Lakhs. Salary estimates are based on 723 salary reports from IBM employees.

Some Final Tips for becoming an IBM Data Engineer

- Have a thorough understanding of any programming language of your choice.

- Practice enough coding questions to be ready for the interview.

- Make sure you’re ready to answer questions about the projects you’ve worked on throughout your professional and academic careers.

- Find and prepare responses to frequently asked interview questions.

- Participate in mock interviews to learn how to respond quickly to technical questions.

- Answer the questions truthfully, without resorting to deception to impress the interviewers.

Frequently Asked Questions

What is the role of a data engineer at IBM?

A Data Engineer understands how to apply technology to address big data challenges and can design large-scale data processing systems for the organization. Data engineers create, maintain, test, and analyze big data solutions within enterprises.

What is a data engineer’s salary?

Data Engineer salaries in India range from 3.3 Lakhs to 20.2 Lakhs per year, with an average yearly pay of 8.2 Lakhs. The estimates are based on 9k salaries reported by Data Engineers.

Who earns more: data analysts or data engineers?

A data analyst’s average annual pay is about $59,000. A data engineer may make up to $90,839 per year, while a data scientist can make up to $91,470 per year. At first glance, the figures for a data engineer and a data scientist may not appear to differ significantly.

What does a data engineer actually do?

Data engineers design systems that gather, manage, and turn raw data into usable information that data scientists and business analysts can interpret in a range of scenarios. Their ultimate objective is to make data available so that businesses can use it to analyze and improve their performance.

Before applying to a large firm such as IBM, make sure your resume is up to date. Include all of your relevant skills and certifications, whether they are software-related or business-related. After deciding which position you want, you may apply online, just like any other job application.